Enabling Data-Driven Discovery at Scale

Marlowe is Stanford's first GPU-based computational instrument: 248 NVIDIA H100 GPUs powering frontier AI research across all seven schools, managed by Stanford Data Science.

GPU-Based Computational Instrument

Named after Philip Marlowe, the film noir detective, Marlowe is designed to give faculty the infrastructure to train foundation models, run large-scale simulations, and pursue computational work at scales previously available only to industry.

A team of Research Data Scientists partners directly with faculty to optimize code, scale training across multiple nodes, and maximize the scientific return from every GPU-hour allocated.

- Partner with faculty to design and execute GPU-accelerated research

- Optimize training pipelines for multi-node scaling

- Integrate open science practices into computational research

- Provide technical consulting on model architecture and distributed training

New to Marlowe?

New PIs get 5,000 free GPU-hours.

Latest Updates

View all →

Apply for Access

5,000 free GPU-hours for new PIs. Get started with your first allocation!

Technical Specs

248 H100 GPUs, InfiniBand NDR, 5.5 PB storage.

Documentation

Getting started, multi-node training, Slurm scheduling.

Cite Marlowe

Using Marlowe in your research? Please acknowledge and cite the instrument.

Research Spotlights

View all spotlights →

Faculty from across Stanford are using Marlowe to train foundation models and to run computations and simulations at scales previously available only to industry.

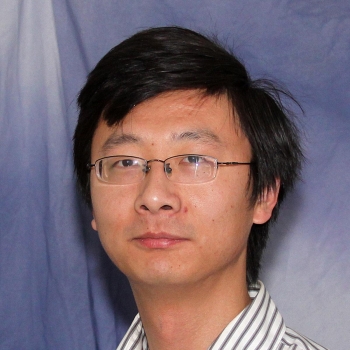

Dan Yamins

Associate Professor of Psychology and Computer Science

School of Humanities & Sciences

Counterfactual World Modeling: Training Brain-Scale Neural Networks

Validated 80B-parameter brain-inspired neural network training on Marlowe in a February 2026 proof-of-concept. Now training PSI2-30B — a 30-billion-parameter counterfactual world model that learns to predict how the physical world changes in response to actions — in a dedicated 30-day campaign across 24 nodes, the first hero run on Marlowe.

Read full story →

Andreas Tolias

Professor of Ophthalmology and of Electrical Engineering

School of Medicine

The Enigma Project: First Foundation Model and Digital Twin of the Brain

Trained the first foundation model of mammalian visual cortex on Marlowe — a 2B-parameter multimodal transformer on recordings from 3 million neurons across 330 mice, establishing the first-ever scaling laws for neuroscience. Now scaling to build a digital twin of the primate brain with up to 1 trillion tokens of neural data across 128 GPUs. One of Marlowe's earliest and most active research groups.

Read full story →

Jure Leskovec

Professor of Computer Science

School of Engineering

AI Virtual Cell: Genomic Foundation Models at Frontier Scale

Building the molecular foundation of the AI Virtual Cell — novel architectures designed for biological sequences (DNA, RNA, proteins) with biologically informed inductive biases, not adaptations of existing language models. Currently at 770M parameters with demonstrated scaling laws, targeting 3B-15B for open-source release comparable to ESM-3 (Science) and EVO-2 (Nature).

Ruijiang Li

Associate Professor of Radiation Oncology

School of Medicine

Virtual Cell World Models for Cancer Biology

Published a vision-language foundation model in Nature (January 2025) for cancer diagnosis and predicting therapeutic response. Now building a generative world model for virtual cells on Marlowe — multi-scale AI that simulates from molecular interactions to tumor microenvironment evolution — integrating histopathology images, clinical notes, spatial transcriptomics, and over 400 million medical images at billion-parameter scale.

In the Press

CoDa marks new era for computing and data science at Stanford (Stanford Report, February 2025)

Stanford welcomes first GPU-based supercomputer (Stanford Report, December 2024)